Welcome to Today’s AIography!

Quick note before we start: I'm trying something new on Thursdays. One topic, one argument, more room to think out loud. Tuesday keeps the multi-story sweep. Let me know how this lands for you.

For the past two years, every AI-video headline has been about generators. Sora. Runway. Kling. Veo. Seedance. Last week it was Doug Liman's $70 million AI-assisted feature, shot on a gray screen in a converted West London car showroom with 55 AI artists in post. The story everyone tells themselves about AI in film is the generator story, the tool that makes the pictures. The assumption baked into all of it: AI reaches the picture-making first, and the cutting room last.

This week, that story got turned around.

In today’s AIography:

The scale of the shift

Eddie AI's Night Shift

Search stops being a shuttle

The existing tools got the memo

What this does not mean

The invitation

What I'm watching this week

Essential tools

Read time: About 7 minutes

WHAT I’M THINKING ABOUT

The scale of the shift

238 AI-focused exhibitors filled NAB 2026's West Hall — up 82% from last year's 131. Google took a full booth for the first time ever. The AI Innovation Pavilion doubled, spilling from one hall into two. Microsoft and Google Cloud both landed on the main-stage keynote schedule.

And the tools pulling real attention from the editors walking the floor weren't the generators.

They were the ones aimed at the cutting room. Eddie AI shipped a product called Night Shift that takes your raw footage at the end of a shoot day and hands you a rough cut on your timeline by 8:00 AM the next morning. TwelveLabs demonstrated Pegasus 1.5, an AI model that lets you search a 40-hour documentary shoot by typing "the moment she laughs and looks out the window." Moments Lab built conversational search directly into Avid Media Composer and DaVinci Resolve panels. And the three biggest non-linear editing tools in the world — Adobe Premiere, DaVinci Resolve, and Avid Media Composer — all pushed major AI infrastructure updates in the same 72-hour window.

I have been at the Avid, and the Moviola before it, and the Steenbeck before that, for 40 years. I know the difference between a feature demo and a tool someone will actually open on Tuesday morning. Most of what hits the AI press doesn't pass that test. This week did.

Here's what I saw, and what I think it means for anyone watching this industry from the sidelines wondering when it becomes their problem.

Eddie AI's Night Shift

Eddie AI (CEO Shamir Allibhai) launched version 3 on April 15 and demoed it live at booth N1672 on the NAB floor all week. The product I want to tell you about is called Night Shift.

Here's how it works. At the end of a shoot day, the assistant editor drops the raw footage into the desktop app — Mac or PC. Or, and this is the part that tells you Allibhai has spent time around actual production crews, the assistant sends a text message with a cloud link (Frame.io, Google Drive, Dropbox) to a phone number: +1 650 444 9211. Eddie ingests everything overnight. By 8:00 AM Pacific the next morning, a rough cut is waiting on the timeline. Interviews separated from B-roll. Multicam synced. Clips logged. A first assembly ready to open in Premiere Pro, DaVinci Resolve, or Final Cut Pro.

For documentary edits, B-roll contextual placement is now generally available. You can feed Eddie a story outline and get back up to a 40-minute narrative rough cut built around that guidance.

Pricing: a free tier with pay-as-you-go credits, Pro at $200 a month, and higher tiers for higher volume.

Let me tell you what that means to someone who has actually sat in the assistant editor's chair.

The first two hours of every assistant editor's day are prep. Load the previous day's media. Sync the multicam. Log the interviews. Make a stringout so the editor has something to scrub through when they come in at 10:00 AM. That work is not creative. It is necessary. On a medium-sized documentary with a four-camera interview setup and a full crew day of B-roll, those two hours can stretch to four. On a bad day, six. And it's work every assistant editor does on top of the actual editorial requests coming from the editor and the producer.

Night Shift takes those two to six hours and moves them out of the assistant editor's morning. Not all of it. Not perfectly. Not without a human checking the result. But a large portion of the purely mechanical work, the part nobody went to film school to do, happens while the building is empty.

The text message detail matters more than it sounds. Producers don't email assistants. They text. "Can you grab me a selects bin from yesterday's interview?" is a text message. The fact that Eddie responds on that same channel is a small thing that tells you Allibhai shipped a product for the way the work actually gets done, not the way a software company wishes it got done.

This is not the generator story. This is the assistant-editor story. It shipped this week. And it works with Premiere Pro, DaVinci Resolve, and Final Cut Pro, editing systems working professionals already use.

Search stops being a shuttle

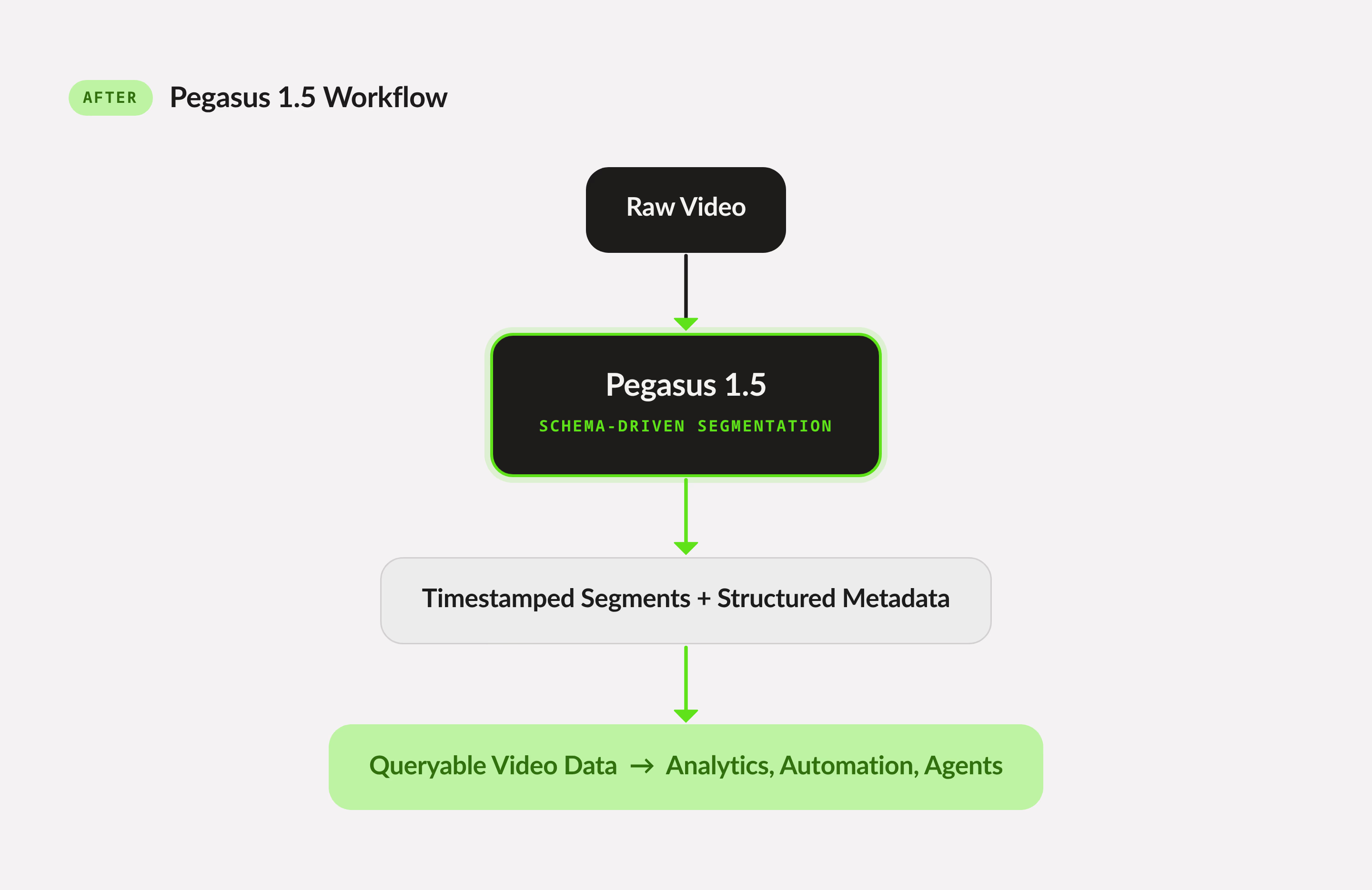

The other big editor-facing story this week came from TwelveLabs. On April 20, they announced Pegasus 1.5, a video-understanding model that discovers temporal boundaries in footage and extracts structured metadata to match a schema the customer defines.

In plain language: you tell Pegasus what you care about (who is speaking, what emotion is on the face, what object is in frame, what the camera is doing), and it produces time-coded metadata for every clip you feed it. TwelveLabs says the model is already in production with a major broadcast network.
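If "schema-defined, time-coded metadata" sounds abstract, here is a minimal Python sketch of the idea. To be clear, this is not TwelveLabs' API or Pegasus output: the `Segment` fields, the `find_moments` and `timecode` helpers, the clip names, and the sample values are all made up for illustration, and the timecode math assumes a whole-number, non-drop frame rate of 24 fps.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """One time-coded span of footage, tagged against a custom schema (hypothetical)."""
    clip: str
    start: float  # seconds from the start of the clip
    end: float
    speaker: str
    emotion: str
    camera: str


def find_moments(segments, **wanted):
    """Return the segments whose fields equal every requested value."""
    return [
        s for s in segments
        if all(getattr(s, field) == value for field, value in wanted.items())
    ]


def timecode(seconds, fps=24):
    """Format seconds as HH:MM:SS:FF at a whole-number, non-drop frame rate."""
    frames = round(seconds * fps)
    ff = frames % fps
    total_seconds = frames // fps
    hh, rem = divmod(total_seconds, 3600)
    mm, ss = divmod(rem, 60)
    return f"{hh:02d}:{mm:02d}:{ss:02d}:{ff:02d}"


# Made-up sample data standing in for what a video-understanding model might return.
SEGMENTS = [
    Segment("A014_C003.mov", 12.0, 19.5, "Maria", "neutral", "static"),
    Segment("A014_C003.mov", 19.5, 27.25, "Maria", "laughing", "slow push-in"),
    Segment("B002_C011.mov", 0.0, 8.0, "none", "none", "handheld"),
]

# "Find the moment Maria laughs" becomes a lookup instead of a shuttle.
hits = find_moments(SEGMENTS, speaker="Maria", emotion="laughing")
for s in hits:
    print(s.clip, timecode(s.start), timecode(s.end))
```

The point of the sketch is the shape of the data, not the lookup itself: once every span of footage carries the fields you asked for plus in and out points, "find the moment" turns into a query, and the result can be handed to a timeline as a marked clip.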

Alongside Pegasus, they launched Rodeo, an AI agent layer that sits on top of the metadata and lets a creator search, edit, and assemble footage using natural language, with no technical integration required. The same announcement included a partnership with Autodesk to bake TwelveLabs' video understanding into Flow Capture, Autodesk's digital-dailies-and-review software.

Moments Lab is playing the same hand from a different angle. Their Discovery Agent, shown at NAB with native panels for Avid Media Composer and DaVinci Resolve, lets you search a media library by typing what you're looking for. It returns clips with precise in and out points, no manual tagging required.

Here's the practitioner frame: assistant editors spend a significant share of their week looking for moments. Stringouts, selects bins, and the old-fashioned workflow of scrubbing through footage with the editor saying "stop, back up, there." That process does not scale well to modern shoot volumes. It scales even worse when you're searching across multiple projects or archival footage.

Conversational search changes the math. You stop shuttling and start asking. The Autodesk Flow Capture integration matters because it tells you this has moved out of the startup-demo stage and into the software that established post houses already buy. The "major broadcast network" line matters because broadcasters don't run unproven tools in production.

Search, at least for editors, is about to stop being a shuttle.

The existing tools got the memo

In the same 72-hour window this week, the three non-linear editing tools that dominate professional post-production all pushed major AI announcements.

Adobe launched Premiere Color Mode as a public beta, a ground-up color grading environment built inside Premiere, aimed squarely at DaVinci Resolve's territory. Firefly AI Assistant, Adobe's new creative agent, was announced alongside, promising multi-step task orchestration across Premiere, Photoshop, Lightroom, Illustrator, Firefly, and more. Kling 3.0 and Kling 3.0 Omni shipped inside Firefly Video Editor, which also acts as a routing layer to 30-plus other generative video models.

Blackmagic shipped DaVinci Resolve 21 the day before, including a new Photo page (a direct shot at Adobe Lightroom), broader AI upgrades across the application, and several new features for facility-scale work.

Avid announced a multi-year partnership with Google Cloud to embed generative and agentic AI directly into Media Composer, along with a new cloud-native platform called Avid Content Core for unified asset management across facilities. Google's Vertex AI and Gemini models get a path into the NLE that cuts virtually every major studio film and broadcast program.

The three biggest names in professional editing — Adobe, Blackmagic, Avid — all moved on AI infrastructure in the same week. Not generation features. Infrastructure. That's the part worth noticing.

When the tools working professionals use every day rebuild their workflows around AI in the same 72-hour window, something has shifted. The question stopped being whether AI reaches post-production. It's already there.

What this does not mean

I want to be honest about what I'm saying and what I'm not.

AI is not replacing the editor. Not this week. Not in any serious way on the horizon. The creative cut, the decision about which take, which line, which moment, which rhythm, is still a human job, and will be for a long time. I have made my living on that decision for many years. Nothing at NAB 2026 suggests that decision is being outsourced.

What IS being replaced is a specific, narrow category of work. The prep. The log. The search. The overnight grind. The hours before the editor arrives that the assistant editor has always been responsible for. That work is not creative. It is necessary. It's time-consuming. And it is exactly the kind of structured, repetitive, pattern-matching work that current AI tools are actually good at.

For a working assistant editor, this is not a small shift. A significant portion of an assistant editor's daily work just became something that can happen overnight while the building is empty. For senior editors, the equation is different. The prep you used to rely on an assistant editor to do is now available from a tool for $200 a month. Which means the assistant editor role, as it has been practiced, is going to change.

I'm not saying that to alarm anyone. I'm saying it because I've lived through this kind of shift before. When non-linear editing went mainstream, the workflow of film rooms changed fundamentally, but the editors didn't go away. The editors adapted. The roles around them changed. The craft endured.

This feels like the same kind of moment. The craft is not disappearing. The roles around it are being rebuilt.

The working post professionals who learn these tools first, not because they're evangelists, but because they're practical, will be the ones running cutting rooms two years from now. That's the honest read.

The invitation

If you're watching all of this from the sidelines (you've opened ChatGPT once, maybe poked at Runway, and you keep reading about AI in film and wondering when it becomes your problem), this is the week to pay attention. Not with panic. And not because the generators are about to replace you. They're not.

Pay attention because the assistant editor's job is going to look different by the end of the year. Because Eddie AI's Pro tier is $200 a month today, and it works with Premiere Pro, DaVinci Resolve, and Final Cut Pro. Because TwelveLabs is already in a broadcast network's production pipeline. Because Adobe, Blackmagic, and Avid all rebuilt around AI infrastructure in the same week.

You don't have to become an AI expert. You have to sit down in front of one of these tools, on a small personal project, and see what happens. See what it gets right. See what it gets wrong. Form your own opinion. That opinion, informed by actual use and held by somebody with craft experience, is going to be more valuable in every room you walk into for the next five years than any amount of reading about it.

This week, AI finally showed up where we work. Not where the marketing copy wanted it to be. Where we actually work.

The next move is on us.

Enjoy your weekend,

— Larry

What I'm watching this week

SAG-AFTRA resumes AMPTP talks April 27 under a media blackout, three days after the WGA ratification vote closes. The AI language from the WGA tentative deal becomes the precedent being negotiated against.

WGA ratification vote closes Friday April 24. Dissent from the World Socialist Web Site and other labor-critical voices argues the AI protections are actually a paid-through licensing regime, not a ban. Worth reading both sides before you make up your mind.

Bitcoin: Killing Satoshi hits the Cannes sales market in May. Doug Liman's $70 million AI-assisted feature will give international buyers their first look at an AI-native-post budget against a traditional comp. The math changes after that screening.

Post-NAB dust. A lot of what got announced this week will quietly ship, quietly die, or quietly get acquired. Which is which will start to become clear over the next 30 days.

Sources

Eddie AI Night Shift: RedShark News · CineD (debut) · CineD (hands-on) · heyeddie.ai

TwelveLabs Pegasus 1.5 + Rodeo: PR Newswire

Moments Lab Discovery Agent: Videomaker

NAB 2026 AI Pavilion scale: TV Technology · NAB Show Press

Adobe NAB announcements: Adobe Blog

DaVinci Resolve 21: Engadget · postPerspective

Avid + Google Cloud: LA Times · PR Newswire / Avid

WGA dissent coverage: World Socialist Web Site

ESSENTIAL TOOLS

AI Filmmaking & Content Creation Tools Database

Check out the Alpha version of our AI Tools Database. We will be adding to it on a regular basis.

Got a tip about a great new tool? Send it along to us.



If you have specific feedback or anything interesting you’d like to share, please let us know by replying to this email.

AIography may earn a commission for products purchased through some links in this newsletter. This doesn't affect our editorial independence or influence our recommendations—we're just keeping the AI lights on!